Quantum Monte Carlo

Table of Contents

- 1. Introduction

- 2. Numerical evaluation of the energy of the hydrogen atom

- 3. Variational Monte Carlo

- 4. Diffusion Monte Carlo

- 5. Project

- 6. Acknowledgments

1. Introduction

This website contains the QMC tutorial of the 2023 LTTC winter school Tutorials in Theoretical Chemistry.

We propose different exercises to understand quantum Monte Carlo (QMC) methods. In the first section, we start with the computation of the energy of a hydrogen atom using numerical integration. The goal of this section is to familiarize yourself with the concept of local energy. Then, we introduce the variational Monte Carlo (VMC) method which computes a statistical estimate of the expectation value of the energy associated with a given wave function, and apply this approach to the hydrogen atom. Finally, we present the diffusion Monte Carlo (DMC) method which we use here to estimate the exact energy of the hydrogen atom and of the H2 molecule, starting from an approximate wave function.

Code examples will be given in Python3, C and Fortran. You can use whatever language you prefer to write the programs.

We consider the stationary solution of the Schrödinger equation, so the wave functions considered here are real: for an \(N\) electron system where the electrons move in the 3-dimensional space, \(\Psi : \mathbb{R}^{3N} \rightarrow \mathbb{R}\). In addition, \(\Psi\) is defined everywhere, continuous, and infinitely differentiable.

All the quantities are expressed in atomic units (energies, coordinates, etc).

1.1. Energy and local energy

For a given system with Hamiltonian \(\hat{H}\) and wave function \(\Psi\), we define the local energy as

\[ E_L(\mathbf{r}) = \frac{\hat{H} \Psi(\mathbf{r})}{\Psi(\mathbf{r})}, \]

where \(\mathbf{r}\) denotes the 3N-dimensional electronic coordinates.

The electronic energy of a system, \(E\), can be rewritten in terms of the local energy \(E_L(\mathbf{r})\) as

\begin{eqnarray*} E & = & \frac{\langle \Psi| \hat{H} | \Psi\rangle}{\langle \Psi |\Psi \rangle} = \frac{\int \Psi(\mathbf{r})\, \hat{H} \Psi(\mathbf{r})\, d\mathbf{r}}{\int |\Psi(\mathbf{r}) |^2 d\mathbf{r}} \\ & = & \frac{\int |\Psi(\mathbf{r})|^2\, \frac{\hat{H} \Psi(\mathbf{r})}{\Psi(\mathbf{r})}\,d\mathbf{r}}{\int |\Psi(\mathbf{r}) |^2 d\mathbf{r}} = \frac{\int |\Psi(\mathbf{r})|^2\, E_L(\mathbf{r})\,d\mathbf{r}}{\int |\Psi(\mathbf{r}) |^2 d\mathbf{r}} \end{eqnarray*}For few dimensions, one can easily compute \(E\) by evaluating the integrals on a grid but, for a high number of dimensions, one can resort to Monte Carlo techniques to compute \(E\).

To this aim, recall that the probabilistic expected value of an arbitrary function \(f(x)\) with respect to a probability density function \(P(x)\) is given by

\[ \langle f \rangle_P = \int_{-\infty}^\infty f(x)\, P(x) dx \]

where the probability density function \(P(x)\) is non-negative and integrates to one:

\[ \int_{-\infty}^\infty P(x)\,dx = 1. \]

Similarly, we can view the energy of a system, \(E\), as the expected value of the local energy with respect to a probability density \(P(\mathbf{r})\) defined in \(3N\) dimensions:

\[ E = \int E_L(\mathbf{r})\, P(\mathbf{r})\,d\mathbf{r} \equiv \langle E_L \rangle_{P}\,, \]

where the probability density is given by the square of the wave function:

\[ P(\mathbf{r}) = \frac{|\Psi(\mathbf{r})|^2}{\int |\Psi(\mathbf{r})|^2 d\mathbf{r}}\,. \]

If we can sample \(N_{\rm MC}\) configurations \(\{\mathbf{r}\}\) distributed as \(P\), we can estimate \(E\) as the average of the local energy computed over these configurations:

\[ E \approx \frac{1}{N_{\rm MC}} \sum_{i=1}^{N_{\rm MC}} E_L(\mathbf{r}_i) \,. \]

Here is an animation of the local energy in the N2 molecule along a QMC trajectory:

2. Numerical evaluation of the energy of the hydrogen atom

In this section, we consider the hydrogen atom with the following wave function:

\[ \Psi(\mathbf{r}) = \exp(-a |\mathbf{r}|) \]

We will first verify that, for a particular value of \(a\), \(\Psi\) is an eigenfunction of the Hamiltonian

\[ \hat{H} = \hat{T} + \hat{V} = - \frac{1}{2} \Delta - \frac{1}{|\mathbf{r}|} \]

To do that, we will compute the local energy and check whether it is constant.

2.1. Local energy

You will now program all quantities needed to compute the local energy of the Hydrogen atom for the given wave function.

Write all the functions of this section in a single file :

hydrogen.py if you use Python, hydrogen.F90 if you use

Fortran, or hydrogen.c and hydrogen.h if you use C.

- When computing a square root in \(\mathbb{R}\), always make sure that the argument of the square root is non-negative.

- When you divide, always make sure that you will not divide by zero

If a floating-point exception can occur, you should make a test to catch the error.

2.1.1. Test-driven development

Test-driven development (TDD) is a software development process in which automated tests are written before the code they are testing. The tests are then run to ensure that the code is working as expected, and the developer continues to write code until all tests pass. The benefits of TDD include:

- Early detection of bugs: By writing tests before the code, developers can identify and fix bugs early on in the development process, before the code becomes too complex to easily debug.

- Improved code quality: Writing tests forces developers to think about the behavior and functionality of the code they are writing, leading to more robust and maintainable code.

- Better documentation: Tests act as a form of documentation for the code, making it clear what the code is supposed to do and how it should behave.

2.1.2. Exercise 1

Write a function called potential that takes a single argument

r, which is an array of 3 double precision numbers. The function

calculates the distance from the origin using the Euclidean

distance formula, and then calculates the potential using this

distance.

If the distance is 0, the program prints a warning that the

potential diverges.

If the distance is greater than 0, the potential is calculated as

\[ V(\mathbf{r}) = -\frac{1}{\sqrt{x^2 + y^2 + z^2}}, \text{ with } \mathbf{r}=\left( \begin{array}{c} x \\ y\\ z\end{array} \right), \]

- Python

#!/usr/bin/env python3 import numpy as np def potential(r): # TODO def test_potential(): expected_output = -1./15. for r in [( 2., 5., 14.), (5., 14., 2.), (-2., 5.,-14.), (5.,-14.,-2.), ( 0., 9.,-12.), (9.,-12., 0.)]: assert potential(r) == expected_output r = (0., 0., 0.) assert potential(r) == -float("inf") print("potential ok") if __name__ == "__main__": test_potential()

- Fortran

If the extension of your file is

.F90and not.f90, the C preprocessor will be called before compiling. This enables the possibility to have conditional compilation with#ifdefstatements, activated with the-Dcompiler option.To compile your code and activate the test, use:

gfortran -DTEST_H hydrogen.F90 -o test_hydrogen

double precision function potential(r) implicit none double precision, intent(in) :: r(3) ! TODO end function potential subroutine test_potential implicit none double precision :: r(3) double precision :: expected_output double precision, external :: potential expected_output = -1.d0/15.d0 r(:) = (/ 2.d0, 5.d0, 14.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ 5.d0, 14.d0, 2.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ -2.d0, 5.d0, -14.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ 5.d0, -14.d0, -2.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ 0.d0, 9.d0, 12.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ 9.d0, -12.d0, 0.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = 0.d0 expected_output = -huge(1.d0) if (potential(r) /= expected_output) stop 'Failed r=0' print *, 'potential ok' end subroutine test_potential #ifdef TEST_H program test_h call test_potential end program test_h #endif

- C

Compile your code with the

-DTEST_Hoption. It will activate the creation of the main function that will test your functions. Don't forget to use-lmto link with the math library.gcc -DTEST_H hydrogen.c -lm -o test_hydrogen

#include <stdio.h> // printf #include <math.h> // sqrt #include <stdlib.h> // exit #include <assert.h> // assert double potential(double r[3]) { // TODO } static void test_potential() { double r[3]; double expected_output; expected_output = -1./15.; r[0] = 2.; r[1] = 5.; r[2] = 14.; assert (potential(r) == expected_output); r[0] = 5.; r[1] = 14.; r[2] = 2.; assert (potential(r) == expected_output); r[0] = -2.; r[1] = 5.; r[2] = -14.; assert (potential(r) == expected_output); r[0] = 5.; r[1] = -14.; r[2] = -2.; assert (potential(r) == expected_output); r[0] = 0.; r[1] = 9.; r[2] = 12.; assert (potential(r) == expected_output); r[0] = 9.; r[1] = -12.; r[2] = 0.; assert (potential(r) == expected_output); expected_output = -HUGE_VAL; r[0] = 0.; r[1] = 0.; r[2] = 0.; assert (potential(r) == expected_output); printf("potential ok\n"); } #ifdef TEST_H int main() { test_potential(); return 0; } #endif

2.1.3. Solution solution

- Python

#!/usr/bin/env python3 import numpy as np def potential(r): distance = np.sqrt(np.dot(r,r)) if distance == 0: print("potential at r=0 diverges") return -float("inf") return -1. / distance def test_potential(): expected_output = -1./15. for r in [( 2., 5., 14.), (5., 14., 2.), (-2., 5.,-14.), (5.,-14.,-2.), ( 0., 9.,-12.), (9.,-12., 0.)]: assert potential(r) == expected_output r = (0., 0., 0.) assert potential(r) == -float("inf") print("potential ok") if __name__ == "__main__": test_potential()

- Fortran

double precision function potential(r) implicit none double precision, intent(in) :: r(3) double precision :: distance distance = dsqrt( r(1)*r(1) + r(2)*r(2) + r(3)*r(3) ) if (distance > 0.d0) then potential = -1.d0 / distance else print *, 'Warning: potential at r=0.d0 diverges' potential = -huge(1.d0) end if end function potential subroutine test_potential implicit none double precision :: r(3) double precision :: expected_output double precision, external :: potential expected_output = -1.d0/15.d0 r(:) = (/ 2.d0, 5.d0, 14.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ 5.d0, 14.d0, 2.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ -2.d0, 5.d0, -14.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ 5.d0, -14.d0, -2.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ 0.d0, 9.d0, 12.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = (/ 9.d0, -12.d0, 0.d0 /) if (potential(r) /= expected_output) stop 'Failed' r(:) = 0.d0 expected_output = -huge(1.d0) if (potential(r) /= expected_output) stop 'Failed r=0' print *, 'potential ok' end subroutine test_potential #ifdef TEST_H program test_h call test_potential end program test_h #endif

- C

#include <stdio.h> // printf #include <math.h> // sqrt #include <stdlib.h> // exit #include <assert.h> // assert double potential(double r[3]) { double distance; distance = sqrt(r[0]*r[0] + r[1]*r[1] + r[2]*r[2]); if (distance > 0) { return -1.0 / distance; } else { printf("Warning: potential at r=0 diverges\n"); return -HUGE_VAL; } } static void test_potential() { double r[3]; double expected_output; expected_output = -1./15.; r[0] = 2.; r[1] = 5.; r[2] = 14.; assert (potential(r) == expected_output); r[0] = 5.; r[1] = 14.; r[2] = 2.; assert (potential(r) == expected_output); r[0] = -2.; r[1] = 5.; r[2] = -14.; assert (potential(r) == expected_output); r[0] = 5.; r[1] = -14.; r[2] = -2.; assert (potential(r) == expected_output); r[0] = 0.; r[1] = 9.; r[2] = 12.; assert (potential(r) == expected_output); r[0] = 9.; r[1] = -12.; r[2] = 0.; assert (potential(r) == expected_output); expected_output = -HUGE_VAL; r[0] = 0.; r[1] = 0.; r[2] = 0.; assert (potential(r) == expected_output); printf("potential ok\n"); } #ifdef TEST_H int main() { test_potential(); return 0; } #endif

2.1.4. Exercise 2

Write a function called psi that takes two arguments: a which is a

double precision number, and r which is an array of 3 double

precision numbers. The function calculates and returns the value of the wave

function at the given point \(\mathbf{r}\).

2.1.5. Solution solution

- Python

def psi(a, r): return np.exp(-a*np.sqrt(np.dot(r,r)))

- Fortran

double precision function psi(a, r) implicit none double precision, intent(in) :: a, r(3) psi = dexp(-a * dsqrt( r(1)*r(1) + r(2)*r(2) + r(3)*r(3) )) end function psi

- C

double psi(double a, double r[3]) { double distance; distance = sqrt(r[0]*r[0] + r[1]*r[1] + r[2]*r[2]); return exp(-a * distance); }

2.1.6. Exercise 3

Write a function called kinetic that takes two arguments: a

which is a double precision number, and r which is an array of 3

double precision numbers. The function calculates the local kinetic energy

at the given point \(\mathbf{r}\).

It first calculates the distance from the origin using the Euclidean distance formula. If the distance is greater than 0, the kinetic energy is calculated using the formula given below. If the distance is 0, the program prints a warning.

The local kinetic energy is defined as \[T_L(\mathbf{r}) = -\frac{1}{2}\frac{\Delta \Psi(\mathbf{r})}{\Psi(\mathbf{r})}.\]

We differentiate \(\Psi\) with respect to \(x\):

\[ \Psi(\mathbf{r}) = \exp(-a\,|\mathbf{r}|) \] \[\frac{\partial \Psi}{\partial x} = \frac{\partial \Psi}{\partial |\mathbf{r}|} \frac{\partial |\mathbf{r}|}{\partial x} = - \frac{a\,x}{|\mathbf{r}|} \Psi(\mathbf{r}) \]

and we differentiate a second time:

\[ \frac{\partial^2 \Psi}{\partial x^2} = \left( \frac{a^2\,x^2}{|\mathbf{r}|^2} - \frac{a(y^2+z^2)}{|\mathbf{r}|^{3}} \right) \Psi(\mathbf{r}). \]

The Laplacian operator \(\Delta = \frac{\partial^2}{\partial x^2} + \frac{\partial^2}{\partial y^2} + \frac{\partial^2}{\partial z^2}\) applied to the wave function gives:

\[ \Delta \Psi (\mathbf{r}) = \left(a^2 - \frac{2a}{\mathbf{|r|}} \right) \Psi(\mathbf{r})\,. \]

Therefore, the local kinetic energy is \[ T_L (\mathbf{r}) = -\frac{1}{2}\left(a^2 - \frac{2a}{\mathbf{|r|}} \right) \]

2.1.7. Solution solution

- Python

def kinetic(a,r): distance = np.sqrt(np.dot(r,r)) if distance > 0: dinv = 1./distance else: print ('Warning: kinetic energy diverges at r=0') dinv = float("inf") return a * (dinv - 0.5 * a)

- Fortran

double precision function kinetic(a,r) implicit none double precision, intent(in) :: a, r(3) double precision :: distance distance = dsqrt( r(1)*r(1) + r(2)*r(2) + r(3)*r(3) ) if (distance > 0.d0) then kinetic = a * (1.d0 / distance - 0.5d0 * a) else print *, 'Warning: kinetic energy diverges at r=0' kinetic = a * (huge(1.d0) - 0.5d0 * a) end if end function kinetic

- C

double kinetic(double a, double r[3]) { double distance; distance = sqrt(r[0]*r[0] + r[1]*r[1] + r[2]*r[2]); if (distance > 0) { return a * (1.0 / distance - 0.5 * a); } else { printf("Warning: kinetic energy diverges at r=0\n"); return a * (HUGE_VAL - 0.5 * a); } }

2.1.8. Exercise 4

Write a function called e_loc that takes two arguments: a

which is a double precision number and r which is an array of 3

double precision numbers. The function calculates the local energy at

the given point \(\mathbf{r}\).

It uses two functions kinetic and potential to calculate the

kinetic energy and potential energy respectively and add them to

get the local energy.

\[ E_L(\mathbf{r}) = -\frac{1}{2} \frac{\Delta \Psi}{\Psi} (\mathbf{r}) + V(\mathbf{r}) \]

- Python

def e_loc(a,r): #TODO

- Fortran

When you call a function in Fortran, you need to declare its return type. You might by accident choose a function name which is the same as an internal function of Fortran. So it is recommended to always use the keyword

externalto make sure the function you are calling is yours.double precision function e_loc(a,r) implicit none double precision, intent(in) :: a, r(3) double precision, external :: kinetic double precision, external :: potential ! TODO end function e_loc

- C

double e_loc(double a, double r[3]) { // TODO }

2.1.9. Solution solution

- Python

def e_loc(a,r): return kinetic(a,r) + potential(r)

- Fortran

double precision function e_loc(a,r) implicit none double precision, intent(in) :: a, r(3) double precision, external :: kinetic double precision, external :: potential e_loc = kinetic(a,r) + potential(r) end function e_loc

- C

double e_loc(double a, double r[3]) { return kinetic(a, r) + potential(r); }

2.1.10. Exercise 5

Find the theoretical value of \(a\) for which \(\Psi\) is an eigenfunction of \(\hat{H}\).

2.1.11. Solution solution

\(a=1\) cancels the \(1/|r|\) term, and makes the energy constant and equal to -0.5 atomic units.

2.2. Plot of the local energy along the \(x\) axis

The program you will write in this section will be written in

another file (plot_hydrogen.py, plot_hydrogen.F90 or plot_hydrogen.c for

example).

It will use the functions previously defined.

If you use C, don't forget to write the header file corresponding

to the functions defined in the previous section.

In Python, you should put at the beginning of the file

#!/usr/bin/env python3 from hydrogen import e_loc

to be able to use the e_loc function of the hydrogen.py file.

It is better to use #!/usr/bin/env python3 than

#!/usr/bin/python because:

- you are sure you are not using Python2, which is incompatible with Python3 syntax,

- if you are on a machine where you can load different

environments (VirtualEnv, module, etc), you will use the

python3provided by your environment, and not the system's one.

In Fortran, you will need to compile all the source files together:

gfortran hydrogen.F90 plot_hydrogen.F90 -o plot_hydrogen

Similarly, in C

gcc hydrogen.c plot_hydrogen.c -lm -o plot_hydrogen

In C, you need the -lm argument to link with the math library

that contains functions like sqrt and exp.

2.2.1. Exercise

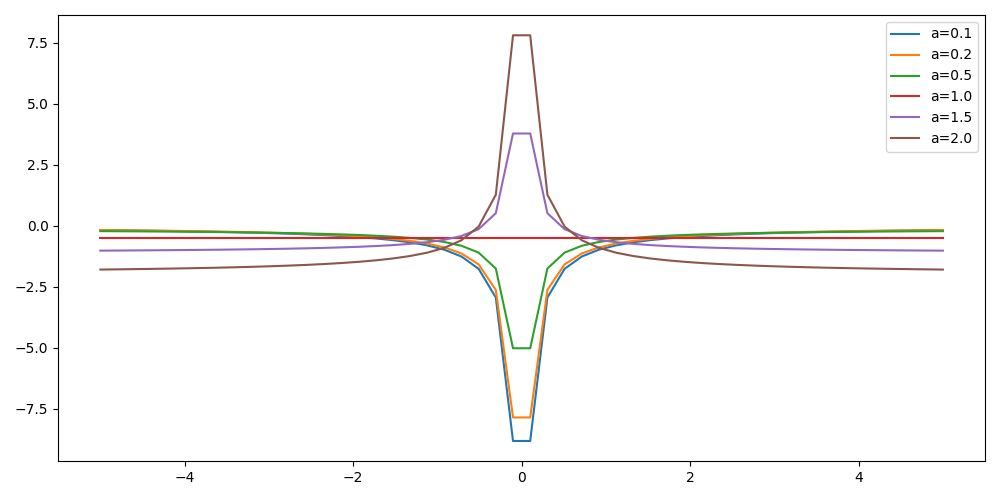

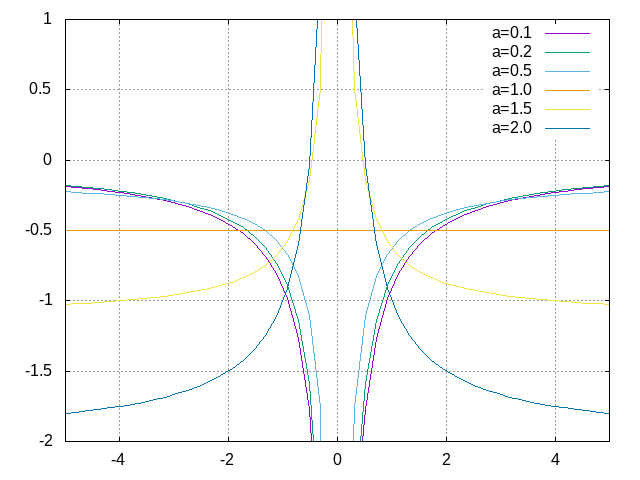

For multiple values of \(a\) (0.1, 0.2, 0.5, 1., 1.5, 2.), plot the local energy along the \(x\) axis.

In Python, you can use matplotlib for example.

In Fortran, it is convenient to write in a text file the values of \(x\) and \(E_L(\mathbf{r})\) for each point, and use Gnuplot to plot the files. With Gnuplot, you will need 2 blank lines to separate the data corresponding to different values of \(a\):

The potential and the kinetic energy both diverge at \(r=0\), so we choose a grid that doesn't contain the origin to avoid numerical issues.

- Python

#!/usr/bin/env python3 import numpy as np import matplotlib.pyplot as plt from hydrogen import e_loc x=np.linspace(-5,5) plt.figure(figsize=(10,5)) # TODO plt.tight_layout() plt.legend() plt.savefig("plot_py.png")

- Fortran

program plot implicit none double precision, external :: e_loc double precision :: x(50), dx integer :: i, j dx = 10.d0/(size(x)-1) do i=1,size(x) x(i) = -5.d0 + (i-1)*dx end do ! TODO end program plot

To compile and run:

gfortran hydrogen.F90 plot_hydrogen.F90 -o plot_hydrogen ./plot_hydrogen > data

- C

#include <stdio.h> #include <math.h> #include "hydrogen.h" #define NPOINTS 50 #define NEXPO 6 int main() { double x[NPOINTS], energy, dx, r[3]; double a[NEXPO] = { 0.1, 0.2, 0.5, 1.0, 1.5, 2.0 }; int i, j; dx = 10.0/(NPOINTS-1); for (i = 0; i < NPOINTS; i++) { x[i] = -5.0 + i*dx; } // TODO return 0; }

To compile and run:

gcc hydrogen.c plot_hydrogen.c -lm -o plot_hydrogen ./plot_hydrogen > data

Plotting for Fortran of C

Plot the data using Gnuplot:

set grid set xrange [-5:5] set yrange [-2:1] plot './data' index 0 using 1:2 with lines title 'a=0.1', \ './data' index 1 using 1:2 with lines title 'a=0.2', \ './data' index 2 using 1:2 with lines title 'a=0.5', \ './data' index 3 using 1:2 with lines title 'a=1.0', \ './data' index 4 using 1:2 with lines title 'a=1.5', \ './data' index 5 using 1:2 with lines title 'a=2.0'

2.2.2. Solution solution

- Python

#!/usr/bin/env python3 import numpy as np import matplotlib.pyplot as plt from hydrogen import e_loc x=np.linspace(-5,5) plt.figure(figsize=(10,5)) for a in [0.1, 0.2, 0.5, 1., 1.5, 2.]: y=np.array([ e_loc(a, np.array([t,0.,0.]) ) for t in x]) plt.plot(x,y,label=f"a={a}") plt.tight_layout() plt.legend() plt.savefig("plot_py.png")

- Fortran

program plot implicit none double precision, external :: e_loc double precision :: x(50), energy, dx, r(3), a(6) integer :: i, j a = (/ 0.1d0, 0.2d0, 0.5d0, 1.d0, 1.5d0, 2.d0 /) dx = 10.d0/(size(x)-1) do i=1,size(x) x(i) = -5.d0 + (i-1)*dx end do r(:) = 0.d0 do j=1,size(a) print *, '# a=', a(j) do i=1,size(x) r(1) = x(i) energy = e_loc( a(j), r ) print *, x(i), energy end do print *, '' print *, '' end do end program plot

- C

double potential (double r[3]); double psi (double a, double r[3]); double kinetic (double a, double r[3]); double e_loc (double a, double r[3]);

#include <stdio.h> #include <math.h> #include "hydrogen.h" #define NPOINTS 50 #define NEXPO 6 int main() { double x[NPOINTS], energy, dx, r[3]; double a[NEXPO] = { 0.1, 0.2, 0.5, 1.0, 1.5, 2.0 }; int i, j; dx = 10.0/(NPOINTS-1); for (i = 0; i < NPOINTS; i++) { x[i] = -5.0 + i*dx; } for (i = 0; i < 3; i++) { r[i] = 0.0; } for (j = 0; j < NEXPO; j++) { printf("# a=%f\n", a[j]); for (i = 0; i < NPOINTS; i++) { r[0] = x[i]; energy = e_loc(a[j], r); printf("%f %f\n", x[i], energy); } printf("\n\n"); } return 0; }

2.3. Numerical estimation of the energy

If the space is discretized in small volume elements \(\mathbf{r}_i\) of size \(\delta \mathbf{r}\), the expression of \(\langle E_L \rangle_{\Psi^2}\) becomes a weighted average of the local energy, where the weights are the values of the square of the wave function at \(\mathbf{r}_i\) multiplied by the volume element:

\[ \langle E \rangle_{\Psi^2} \approx \frac{\sum_i w_i E_L(\mathbf{r}_i)}{\sum_i w_i}, \;\; w_i = \left|\Psi(\mathbf{r}_i)\right|^2 \delta \mathbf{r} \]

The energy is biased because:

- The volume elements are not infinitely small (discretization error)

- The energy is evaluated only inside the box (incompleteness of the space)

2.3.1. Exercise

Compute a numerical estimate of the energy using a grid of \(50\times50\times50\) points in the range \((-5,-5,-5) \le \mathbf{r} \le (5,5,5)\).

- Python

#!/usr/bin/env python3 import numpy as np from hydrogen import e_loc, psi interval = np.linspace(-5,5,num=50) delta = (interval[1]-interval[0])**3 r = np.array([0.,0.,0.]) for a in [0.1, 0.2, 0.5, 0.9, 1., 1.5, 2.]: # TODO print(f"a = {a} \t E = {E}")

- Fortran

program energy_hydrogen implicit none double precision, external :: e_loc, psi double precision :: x(50), w, delta, energy, dx, r(3), a(6), normalization integer :: i, k, l, j a = (/ 0.1d0, 0.2d0, 0.5d0, 1.d0, 1.5d0, 2.d0 /) dx = 10.d0/(size(x)-1) do i=1,size(x) x(i) = -5.d0 + (i-1)*dx end do do j=1,size(a) ! TODO print *, 'a = ', a(j), ' E = ', energy end do end program energy_hydrogen

To compile the Fortran code and run it:

gfortran hydrogen.F90 energy_hydrogen.F90 -o energy_hydrogen ./energy_hydrogen

- C

#include <stdio.h> #include <math.h> #include "hydrogen.h" #define NPOINTS 50 #define NEXPO 6 int main() { double x[NPOINTS], energy, dx, r[3], delta, normalization, w; double a[NEXPO] = { 0.1, 0.2, 0.5, 1.0, 1.5, 2.0 }; dx = 10.0/(NPOINTS-1); for (int i = 0; i < NPOINTS; i++) { x[i] = -5.0 + i*dx; } for (int j = 0; j < NEXPO; j++) { // TODO printf("a = %f E = %f\n", a[j], energy); } }

To compile the C code and run it:

gcc hydrogen.c energy_hydrogen.c -lm -o energy_hydrogen ./energy_hydrogen

Hints if you are stuck

The program starts by defining some variables and arrays, including an array

athat contains 6 different values of the parameterawhich will be used in thee_locandpsifunctions to calculate the local energy and wave function respectively.The program then calculates the value of

dx, which is the step size in \(x\), and sets up an arrayxthat contains 50 equally spaced points between -5 and 5. The program sets all elements of therarray to 0, and then enters a nested loop structure. The outer loop iterates over the values ofain theaarray, and the next three loops iterate over the values ofxin thexarray for the three dimensions. For each value ofaandx, the program sets the first element of therarray to the current value ofx, calls thepsifunction to calculate the wave function, calls thee_locfunction to calculate the local energy, and then accumulates the energy and the normalization factor.At the end of the outer loop, the program calculates the final energy by dividing the accumulated energy by the accumulated normalization factor, and prints the value of

aand the corresponding energy.

2.3.2. Solution solution

- Python

#!/usr/bin/env python3 import numpy as np from hydrogen import e_loc, psi interval = np.linspace(-5,5,num=50) delta = (interval[1]-interval[0])**3 r = np.array([0.,0.,0.]) for a in [0.1, 0.2, 0.5, 0.9, 1., 1.5, 2.]: E = 0. normalization = 0. for x in interval: r[0] = x for y in interval: r[1] = y for z in interval: r[2] = z w = psi(a,r) w = w * w * delta E += w * e_loc(a,r) normalization += w E = E / normalization print(f"a = {a} \t E = {E}")

a = 0.1 E = -0.24518438948809218 a = 0.2 E = -0.26966057967803525 a = 0.5 E = -0.3856357612517407 a = 0.9 E = -0.49435709786716214 a = 1.0 E = -0.5 a = 1.5 E = -0.39242967082602226 a = 2.0 E = -0.08086980667844901

- Fortran

program energy_hydrogen implicit none double precision, external :: e_loc, psi double precision :: x(50), w, delta, energy, dx, r(3), a(6), normalization integer :: i, k, l, j a = (/ 0.1d0, 0.2d0, 0.5d0, 1.d0, 1.5d0, 2.d0 /) dx = 10.d0/(size(x)-1) do i=1,size(x) x(i) = -5.d0 + (i-1)*dx end do delta = dx**3 r(:) = 0.d0 do j=1,size(a) energy = 0.d0 normalization = 0.d0 do i=1,size(x) r(1) = x(i) do k=1,size(x) r(2) = x(k) do l=1,size(x) r(3) = x(l) w = psi(a(j),r) w = w * w * delta energy = energy + w * e_loc(a(j), r) normalization = normalization + w end do end do end do energy = energy / normalization print *, 'a = ', a(j), ' E = ', energy end do end program energy_hydrogen

a = 0.10000000000000001 E = -0.24518438948809140 a = 0.20000000000000001 E = -0.26966057967803236 a = 0.50000000000000000 E = -0.38563576125173815 a = 1.0000000000000000 E = -0.50000000000000000 a = 1.5000000000000000 E = -0.39242967082602065 a = 2.0000000000000000 E = -8.0869806678448772E-002

- C

#include <stdio.h> #include <math.h> #include "hydrogen.h" #define NPOINTS 50 #define NEXPO 6 int main() { double x[NPOINTS], energy, dx, r[3], delta, normalization, w; double a[NEXPO] = { 0.1, 0.2, 0.5, 1.0, 1.5, 2.0 }; dx = 10.0/(NPOINTS-1); for (int i = 0; i < NPOINTS; i++) { x[i] = -5.0 + i*dx; } delta = dx*dx*dx; for (int i = 0; i < 3; i++) { r[i] = 0.0; } for (int j = 0; j < NEXPO; j++) { energy = 0.0; normalization = 0.0; for (int i = 0; i < NPOINTS; i++) { r[0] = x[i]; for (int k = 0; k < NPOINTS; k++) { r[1] = x[k]; for (int l = 0; l < NPOINTS; l++) { r[2] = x[l]; w = psi(a[j], r); w = w*w*delta; energy += w*e_loc(a[j], r); normalization += w; } } } energy = energy/normalization; printf("a = %f E = %f\n", a[j], energy); } }

a = 0.100000 E = -0.245184 a = 0.200000 E = -0.269661 a = 0.500000 E = -0.385636 a = 1.000000 E = -0.500000 a = 1.500000 E = -0.392430 a = 2.000000 E = -0.080870

2.4. Variance of the local energy

The variance of the local energy is a functional of \(\Psi\) which measures the magnitude of the fluctuations of the local energy associated with \(\Psi\) around its average:

\[ \sigma^2(E_L) = \frac{\int |\Psi(\mathbf{r})|^2\, \left[ E_L(\mathbf{r}) - E \right]^2 \, d\mathbf{r}}{\int |\Psi(\mathbf{r}) |^2 d\mathbf{r}} \] which can be simplified as

\[ \sigma^2(E_L) = \langle E_L^2 \rangle_{\Psi^2} - \langle E_L \rangle_{\Psi^2}^2.\]

If the local energy is constant (i.e. \(\Psi\) is an eigenfunction of \(\hat{H}\)) the variance is zero, so the variance of the local energy can be used as a measure of the quality of a wave function.

2.4.1. Exercise (optional)

Prove that : \[\langle \left( E - \langle E \rangle_{\Psi^2} \right)^2\rangle_{\Psi^2} = \langle E^2 \rangle_{\Psi^2} - \langle E \rangle_{\Psi^2}^2 \]

2.4.2. Solution solution

\(\bar{E} = \langle E \rangle\) is a constant, so \(\langle \bar{E} \rangle = \bar{E}\) .

\begin{eqnarray*} \langle (E - \bar{E})^2 \rangle & = & \langle E^2 - 2 E \bar{E} + \bar{E}^2 \rangle \\ &=& \langle E^2 \rangle - 2 \langle E \bar{E} \rangle + \langle \bar{E}^2 \rangle \\ &=& \langle E^2 \rangle - 2 \langle E \rangle \bar{E} + \bar{E}^2 \\ &=& \langle E^2 \rangle - 2 \bar{E}^2 + \bar{E}^2 \\ &=& \langle E^2 \rangle - \bar{E}^2 \\ &=& \langle E^2 \rangle - \langle E \rangle^2 \\ \end{eqnarray*}2.4.3. Exercise

Add the calculation of the variance to the previous code, and compute a numerical estimate of the variance of the local energy using a grid of \(50\times50\times50\) points in the range \((-5,-5,-5) \le \mathbf{r} \le (5,5,5)\) for different values of \(a\).

- Python

#!/usr/bin/env python3 import numpy as np from hydrogen import e_loc, psi interval = np.linspace(-5,5,num=50) delta = (interval[1]-interval[0])**3 r = np.array([0.,0.,0.]) for a in [0.1, 0.2, 0.5, 0.9, 1., 1.5, 2.]: # TODO print(f"a = {a} \t E = {E:10.8f} \t \sigma^2 = {s2:10.8f}")

- Fortran

program variance_hydrogen implicit none double precision :: x(50), w, delta, energy, energy2 double precision :: dx, r(3), a(6), normalization, e_tmp, s2 integer :: i, k, l, j double precision, external :: e_loc, psi a = (/ 0.1d0, 0.2d0, 0.5d0, 1.d0, 1.5d0, 2.d0 /) dx = 10.d0/(size(x)-1) do i=1,size(x) x(i) = -5.d0 + (i-1)*dx end do do j=1,size(a) ! TODO print *, 'a = ', a(j), ' E = ', energy, ' s2 = ', s2 end do end program variance_hydrogen

To compile and run:

gfortran hydrogen.F90 variance_hydrogen.F90 -o variance_hydrogen ./variance_hydrogen

- C

#include <stdio.h> #include <math.h> #include "hydrogen.h" #define NPOINTS 50 #define NEXPO 6 int main() { double x[NPOINTS], energy, dx, r[3], delta, normalization, w; double a[NEXPO] = { 0.1, 0.2, 0.5, 1.0, 1.5, 2.0 }; double energy2, e_tmp, s2; dx = 10.0/(NPOINTS-1); for (int i = 0; i < NPOINTS; i++) { x[i] = -5.0 + i*dx; } for (int j = 0; j < NEXPO; j++) { // TODO printf("a = %f E = %f s2 = %f\n", a[j], energy, s2); } }

To compile and run:

gcc hydrogen.c variance_hydrogen.c -lm -o variance_hydrogen ./variance_hydrogen

2.4.4. Solution solution

- Python

#!/usr/bin/env python3 import numpy as np from hydrogen import e_loc, psi interval = np.linspace(-5,5,num=50) delta = (interval[1]-interval[0])**3 r = np.array([0.,0.,0.]) for a in [0.1, 0.2, 0.5, 0.9, 1., 1.5, 2.]: E = 0. E2 = 0. normalization = 0. for x in interval: r[0] = x for y in interval: r[1] = y for z in interval: r[2] = z w = psi(a,r) w = w * w * delta e_tmp = e_loc(a,r) E += w * e_tmp E2 += w * e_tmp * e_tmp normalization += w E = E / normalization E2 = E2 / normalization s2 = E2 - E**2 print(f"a = {a} \t E = {E:10.8f} \t \sigma^2 = {s2:10.8f}")

a = 0.1 E = -0.24518439 \sigma^2 = 0.02696522 a = 0.2 E = -0.26966058 \sigma^2 = 0.03719707 a = 0.5 E = -0.38563576 \sigma^2 = 0.05318597 a = 0.9 E = -0.49435710 \sigma^2 = 0.00577812 a = 1.0 E = -0.50000000 \sigma^2 = 0.00000000 a = 1.5 E = -0.39242967 \sigma^2 = 0.31449671 a = 2.0 E = -0.08086981 \sigma^2 = 1.80688143

- Fortran

program variance_hydrogen implicit none double precision :: x(50), w, delta, energy, energy2 double precision :: dx, r(3), a(6), normalization, e_tmp, s2 integer :: i, k, l, j double precision, external :: e_loc, psi a = (/ 0.1d0, 0.2d0, 0.5d0, 1.d0, 1.5d0, 2.d0 /) dx = 10.d0/(size(x)-1) do i=1,size(x) x(i) = -5.d0 + (i-1)*dx end do delta = dx**3 r(:) = 0.d0 do j=1,size(a) energy = 0.d0 energy2 = 0.d0 normalization = 0.d0 do i=1,size(x) r(1) = x(i) do k=1,size(x) r(2) = x(k) do l=1,size(x) r(3) = x(l) w = psi(a(j),r) w = w * w * delta e_tmp = e_loc(a(j), r) energy = energy + w * e_tmp energy2 = energy2 + w * e_tmp * e_tmp normalization = normalization + w end do end do end do energy = energy / normalization energy2 = energy2 / normalization s2 = energy2 - energy*energy print *, 'a = ', a(j), ' E = ', energy, ' s2 = ', s2 end do end program variance_hydrogen

a = 0.10000000000000001 E = -0.24518438948809140 s2 = 2.6965218719722767E-002 a = 0.20000000000000001 E = -0.26966057967803236 s2 = 3.7197072370201284E-002 a = 0.50000000000000000 E = -0.38563576125173815 s2 = 5.3185967578480653E-002 a = 1.0000000000000000 E = -0.50000000000000000 s2 = 0.0000000000000000 a = 1.5000000000000000 E = -0.39242967082602065 s2 = 0.31449670909172917 a = 2.0000000000000000 E = -8.0869806678448772E-002 s2 = 1.8068814270846534

- C

#include <stdio.h> #include <math.h> #include "hydrogen.h" #define NPOINTS 50 #define NEXPO 6 int main() { double x[NPOINTS], energy, dx, r[3], delta, normalization, w; double a[NEXPO] = { 0.1, 0.2, 0.5, 1.0, 1.5, 2.0 }; double energy2, e_tmp, s2; dx = 10.0/(NPOINTS-1); for (int i = 0; i < NPOINTS; i++) { x[i] = -5.0 + i*dx; } delta = dx*dx*dx; for (int i = 0; i < 3; i++) { r[i] = 0.0; } for (int j = 0; j < NEXPO; j++) { energy = 0.0; energy2 = 0.0; normalization = 0.0; for (int i = 0; i < NPOINTS; i++) { r[0] = x[i]; for (int k = 0; k < NPOINTS; k++) { r[1] = x[k]; for (int l = 0; l < NPOINTS; l++) { r[2] = x[l]; w = psi(a[j], r); w = w*w*delta; e_tmp = e_loc(a[j], r); energy += w * e_tmp; energy2 += w * e_tmp * e_tmp; normalization += w; } } } energy = energy/normalization; energy2 = energy2/normalization; s2 = energy2 - energy*energy; printf("a = %f E = %f s2 = %f\n", a[j], energy, s2); } }

gcc hydrogen.c variance_hydrogen.c -lm -o variance_hydrogen ./variance_hydrogen

a = 0.100000 E = -0.245184 s2 = 0.026965 a = 0.200000 E = -0.269661 s2 = 0.037197 a = 0.500000 E = -0.385636 s2 = 0.053186 a = 1.000000 E = -0.500000 s2 = 0.000000 a = 1.500000 E = -0.392430 s2 = 0.314497 a = 2.000000 E = -0.080870 s2 = 1.806881

3. Variational Monte Carlo

Numerical integration with deterministic methods is very efficient in low dimensions. When the number of dimensions becomes large, instead of computing the average energy as a numerical integration on a grid, it is usually more efficient to use Monte Carlo sampling.

Moreover, Monte Carlo sampling will allow us to remove the bias due to the discretization of space, and compute a statistical confidence interval.

3.1. Computation of the statistical error

To compute the statistical error, you need to perform \(M\) independent Monte Carlo calculations. You will obtain \(M\) different estimates of the energy, which are expected to have a Gaussian distribution for large \(M\), according to the Central Limit Theorem.

The estimate of the energy is

\[ E = \frac{1}{M} \sum_{i=1}^M E_i \]

The variance of the average energies can be computed as

\[ \sigma^2 = \frac{1}{M-1} \sum_{i=1}^{M} (E_i - E)^2 \]

And the confidence interval is given by

\[ E \pm \delta E, \text{ where } \delta E = \frac{\sigma}{\sqrt{M}} \]

3.1.1. Exercise

Write a function returning the average and statistical error of an input array.

- Python

#!/usr/bin/env python3 from math import sqrt def ave_error(arr): #TODO return (average, error)

- Fortran

subroutine ave_error(x,n,ave,err) implicit none integer, intent(in) :: n double precision, intent(in) :: x(n) double precision, intent(out) :: ave, err ! TODO end subroutine ave_error

- C

#include <stdio.h> #include <math.h> #include <stddef.h> // for size_t void ave_error(double* x, size_t n, double *ave, double *err) { // TODO }

Hints if you are stuck

Write a subroutine called

ave_errorthat calculates the average and error of a given array of real numbers. The subroutine takes in three arguments: an arrayxof real numbers, an integernrepresenting the size of the array, and two output argumentsaveanderrrepresenting the average and error of the array, respectively.The subroutine starts by checking if the input integer

nis less than 1. If it is, the subroutine stops and prints an error message. Ifnis equal to 1, the subroutine sets the average to the first element of the array and the error to zero. Ifnis greater than 1, the subroutine calculates the average of the array by dividing the sum of the elements by the number of elements in the array. Then it calculates the variance of the array by taking the sum of the square of the difference between each element and the average and dividing byn-1. Finally, it calculates the error by taking the square root of the variance divided byn.

3.1.2. Solution solution

- Python

#!/usr/bin/env python3 from math import sqrt def ave_error(arr): M = len(arr) assert(M>0) if M == 1: average = arr[0] error = 0. else: average = sum(arr)/M variance = 1./(M-1) * sum( [ (x - average)**2 for x in arr ] ) error = sqrt(variance/M) return (average, error)

- Fortran

subroutine ave_error(x,n,ave,err) implicit none integer, intent(in) :: n double precision, intent(in) :: x(n) double precision, intent(out) :: ave, err double precision :: variance if (n < 1) then stop 'n<1 in ave_error' else if (n == 1) then ave = x(1) err = 0.d0 else ave = sum(x(:)) / dble(n) variance = sum((x(:) - ave)**2) / dble(n-1) err = dsqrt(variance/dble(n)) endif end subroutine ave_error

- C

#include <stddef.h> // for size_t void ave_error(double* x, size_t n, double *ave, double *err);

#include <stdio.h> #include <math.h> #include <stddef.h> // for size_t void ave_error(double* x, size_t n, double *ave, double *err) { double variance; if (n < 1) { printf("n<1 in ave_error\n"); return; } else if (n == 1) { *ave = x[0]; *err = 0.0; } else { double sum = 0.0; for (int i = 0; i < n; i++) { sum += x[i]; } *ave = sum / (double)n; variance = 0.0; for (int i = 0; i < n; i++) { double x2 = x[i] - *ave; variance += x2*x2; } variance = variance / (double)(n - 1); *err = sqrt(variance / (double)n); } }

3.2. Uniform sampling in the box

We will now perform our first Monte Carlo calculation to compute the energy of the hydrogen atom.

Consider again the expression of the energy

\begin{eqnarray*} E & = & \frac{\int E_L(\mathbf{r})|\Psi(\mathbf{r})|^2\,d\mathbf{r}}{\int |\Psi(\mathbf{r}) |^2 d\mathbf{r}}\,. \end{eqnarray*}Clearly, the square of the wave function is a good choice of probability density to sample but we will start with something simpler and rewrite the energy as

\begin{eqnarray*} E & = & \frac{\int E_L(\mathbf{r})\frac{|\Psi(\mathbf{r})|^2}{P(\mathbf{r})}P(\mathbf{r})\, \,d\mathbf{r}}{\int \frac{|\Psi(\mathbf{r})|^2 }{P(\mathbf{r})}P(\mathbf{r})d\mathbf{r}}\,. \end{eqnarray*}Here, we will sample a uniform probability \(P(\mathbf{r})\) in a cube of volume \(L^3\) centered at the origin:

\[ P(\mathbf{r}) = \frac{1}{L^3}\,, \]

and zero outside the cube.

One Monte Carlo run will consist of \(N_{\rm MC}\) Monte Carlo iterations. At every Monte Carlo iteration:

- Draw a random point \(\mathbf{r}_i\) in the box \((-5,-5,-5) \le (x,y,z) \le (5,5,5)\)

- Compute \(|\Psi(\mathbf{r}_i)|^2\) and accumulate the result in a

variable

normalization - Compute \(|\Psi(\mathbf{r}_i)|^2 \times E_L(\mathbf{r}_i)\), and accumulate the

result in a variable

energy

Once all the iterations have been computed, the run returns the average energy \(\bar{E}_k\) over the \(N_{\rm MC}\) iterations of the run.

To compute the statistical error, perform \(M\) independent runs. The final estimate of the energy will be the average over the \(\bar{E}_k\), and the variance of the \(\bar{E}_k\) will be used to compute the statistical error.

3.2.1. Exercise

Parameterize the wave function with \(a=1.2\). Perform 30 independent Monte Carlo runs (\(M\)), each with 100 000 Monte Carlo steps (\(N_{MC}\)). Store the final energies of each run and use this array to compute the average energy and the associated error bar (\(\delta E\)).

- Python

To draw a uniform random number in Python, you can use the

random.uniformfunction of Numpy.#!/usr/bin/env python3 from hydrogen import * from qmc_stats import * def MonteCarlo(a, nmax): # TODO a = 1.2 nmax = 100000 #TODO print(f"E = {E} +/- {deltaE}")

- Fortran

To draw a uniform random number in Fortran, you can use the

RANDOM_NUMBERsubroutine.When running Monte Carlo calculations, the number of steps is usually very large. We expect

nmaxto be possibly larger than 2 billion. You would need to use 8-byte integers (integer*8) to represent it, as well as the index of the current step. This would imply modifying also theave_errorfunction.subroutine uniform_montecarlo(a,nmax,energy) implicit none double precision, intent(in) :: a integer , intent(in) :: nmax double precision, intent(out) :: energy integer :: istep double precision :: normalization, r(3), w double precision, external :: e_loc, psi ! TODO end subroutine uniform_montecarlo program qmc implicit none double precision, parameter :: a = 1.2d0 integer , parameter :: nmax = 100000 integer , parameter :: nruns = 30 integer :: irun double precision :: X(nruns) double precision :: ave, err !TODO print *, 'E = ', ave, '+/-', err end program qmc

gfortran hydrogen.F90 qmc_stats.F90 qmc_uniform.F90 -o qmc_uniform ./qmc_uniform

- C

To draw a uniform random number in C, you can use:

drand48(), which is defined in thestdlib.hheader. To initialize randomly the generator, usesrand48(time(NULL))using thetimefunction fromtime.h.#include <stdlib.h> #include <math.h> #include <stdio.h> #include <stddef.h> // for size_t #include <time.h> #include "hydrogen.h" #include "qmc_stats.h" // for ave_error void uniform_montecarlo(double a, size_t nmax, double *energy) { // TODO } int main(void) { #define a 1.2 #define nmax 100000 #define nruns 30 srand48(time(NULL)); // TODO printf("E = %f +/- %f\n", ave, err); return 0; }

Hints if you are stuck

Write first a subroutine called

uniform_montecarlothat calculates the energy of the Hydrogen atom using the Monte Carlo method with a uniform distribution. The subroutine takes in three arguments: a real numbera, an integernmaxrepresenting the number of Monte Carlo steps, and an output argumentenergyrepresenting the calculated energy.The subroutine starts by initializing the energy and normalization factor to 0 and defines some variables such as

istep,normalization,randw. The subroutine also makes use of two external functions:e_locandpsiwhich were defined in previous examples.The subroutine then enters a loop that iterates for

nmaxtimes. On each iteration, the subroutine generates three random numbers between 0 and 1, and then uses these random numbers to calculate a random point in 3D space between -5 and 5. The subroutine then calls thepsifunction to calculate the wave function at that point and thee_locfunction to calculate the local energy at that point. The subroutine then accumulates the energy and normalization factor using the generated point and the results of thepsiande_locfunctions.At the end of the loop, the subroutine calculates the final energy by dividing the accumulated energy by the accumulated normalization factor.

Then, write a Fortran program called

qmcthat uses theuniform_montecarlosubroutine to estimate the energy of the Hydrogen atom using the Monte Carlo method. The program starts by defining some parameters:a,nmax, andnruns.The program then defines a variable

irunwhich is used as a counter in a loop, an arrayXof lengthnrunsto store the energies calculated by theuniform_montecarlosubroutine, and variablesaveanderrto store the average and error of the energies, respectively.The program then enters a loop that iterates for

nrunstimes. On each iteration, the program calls theuniform_montecarlosubroutine to calculate the energy of the Hydrogen atom and stores the result in theXarray.After the loop, the program calls the

ave_errorsubroutine to calculate the average and error of the energies stored in theXarray and assigns the results toaveanderrvariables respectively.Finally, the program prints the average and error of the energies.

3.2.2. Solution solution

- Python

#!/usr/bin/env python3 from hydrogen import * from qmc_stats import * def MonteCarlo(a, nmax): energy = 0. normalization = 0. for istep in range(nmax): r = np.random.uniform(-5., 5., (3)) f = psi(a,r) w = f*f energy += w * e_loc(a,r) normalization += w return energy / normalization a = 1.2 nmax = 100000 X = [MonteCarlo(a,nmax) for i in range(30)] E, deltaE = ave_error(X) print(f"E = {E} +/- {deltaE}")

E = -0.4793311279357434 +/- 0.002563797463053474

- Fortran

subroutine uniform_montecarlo(a,nmax,energy) implicit none double precision, intent(in) :: a integer*8 , intent(in) :: nmax double precision, intent(out) :: energy integer*8 :: istep double precision :: normalization, r(3), w, f double precision, external :: e_loc, psi energy = 0.d0 normalization = 0.d0 do istep = 1,nmax call random_number(r) r(:) = -5.d0 + 10.d0*r(:) f = psi(a,r) w = f*f energy = energy + w * e_loc(a,r) normalization = normalization + w end do energy = energy / normalization end subroutine uniform_montecarlo program qmc implicit none double precision, parameter :: a = 1.2d0 integer*8 , parameter :: nmax = 100000 integer , parameter :: nruns = 30 integer :: irun double precision :: X(nruns) double precision :: ave, err do irun=1,nruns call uniform_montecarlo(a, nmax, X(irun)) enddo call ave_error(X, nruns, ave, err) print *, 'E = ', ave, '+/-', err end program qmc

E = -0.47958062416368030 +/- 3.1460315707685389E-003

- C

#include <stdlib.h> #include <math.h> #include <stdio.h> #include <time.h> #include <stddef.h> // for size_t #include "hydrogen.h" #include "qmc_stats.h" // for ave_error void uniform_montecarlo(double a, size_t nmax, double *energy) { size_t istep; double normalization, r[3], w, f; *energy = 0.0; normalization = 0.0; for (istep = 0; istep < nmax; istep++) { for (int i = 0; i < 3; i++) { r[i] = drand48(); } r[0] = -5.0 + 10.0 * r[0]; r[1] = -5.0 + 10.0 * r[1]; r[2] = -5.0 + 10.0 * r[2]; f = psi(a, r); w = f*f; *energy += w * e_loc(a, r); normalization += w; } *energy = *energy / normalization; } int main(void) { #define a 1.2 #define nmax 100000 #define nruns 30 double X[nruns]; double ave, err; srand48(time(NULL)); for (size_t irun = 0; irun < nruns; irun++) { uniform_montecarlo(a, nmax, &X[irun]); } ave_error(X, nruns, &ave, &err); printf("E = %f +/- %f\n", ave, err); return 0; }

E = -0.479507 +/- 0.001972

3.3. Metropolis sampling with Ψ2

We will now use the square of the wave function to sample random points distributed with the probability density \[ P(\mathbf{r}) = \frac{|\Psi(\mathbf{r})|^2}{\int |\Psi(\mathbf{r})|^2 d\mathbf{r}}\,. \]

The expression of the average energy is now simplified as the average of the local energies, since the weights are taken care of by the sampling:

\[ E \approx \frac{1}{N_{\rm MC}}\sum_{i=1}^{N_{\rm MC}} E_L(\mathbf{r}_i)\,. \]

To sample a chosen probability density, an efficient method is the Metropolis-Hastings sampling algorithm. Starting from a random initial position \(\mathbf{r}_0\), we will realize a random walk:

\[ \mathbf{r}_0 \rightarrow \mathbf{r}_1 \rightarrow \mathbf{r}_2 \ldots \rightarrow \mathbf{r}_{N_{\rm MC}}\,, \]

according to the following algorithm.

At every step, we propose a new move according to a transition probability \(T(\mathbf{r}_{n}\rightarrow\mathbf{r}_{n+1})\) of our choice.

For simplicity, we will move the electron in a 3-dimensional box of side \(2\delta L\) centered at the current position of the electron:

\[ \mathbf{r}_{n+1} = \mathbf{r}_{n} + \delta L \, \mathbf{u} \]

where \(\delta L\) is a fixed constant, and \(\mathbf{u}\) is a uniform random number in a 3-dimensional box \((-1,-1,-1) \le \mathbf{u} \le (1,1,1)\).

After having moved the electron, we add the accept/reject step that guarantees that the distribution of the \(\mathbf{r}_n\) is \(\Psi^2\). This amounts to accepting the move with probability

\[ A(\mathbf{r}_{n}\rightarrow\mathbf{r}_{n+1}) = \min\left(1,\frac{T(\mathbf{r}_{n+1}\rightarrow\mathbf{r}_{n}) P(\mathbf{r}_{n+1})}{T(\mathbf{r}_{n}\rightarrow\mathbf{r}_{n+1})P(\mathbf{r}_{n})}\right)\,, \]

which, for our choice of transition probability, becomes

\[ A(\mathbf{r}_{n}\rightarrow\mathbf{r}_{n+1}) = \min\left(1,\frac{P(\mathbf{r}_{n+1})}{P(\mathbf{r}_{n})}\right)= \min\left(1,\frac{|\Psi(\mathbf{r}_{n+1})|^2}{|\Psi(\mathbf{r}_{n})|^2}\right)\,. \]

Explain why the transition probability cancels out in the expression of \(A\).

Also note that we do not need to compute the norm of the wave function!

The algorithm is summarized as follows:

- Evaluate the local energy at \(\mathbf{r}_n\) and add it to an accumulator

- Compute a new position \(\mathbf{r'} = \mathbf{r}_n + \delta L\, \mathbf{u}\)

- Evaluate \(\Psi(\mathbf{r}')\) at the new position

- Compute the ratio \(A = \frac{\left|\Psi(\mathbf{r'})\right|^2}{\left|\Psi(\mathbf{r}_{n})\right|^2}\)

- Draw a uniform random number \(v \in [0,1]\)

- if \(v \le A\), accept the move : set \(\mathbf{r}_{n+1} = \mathbf{r'}\)

- else, reject the move : set \(\mathbf{r}_{n+1} = \mathbf{r}_n\)

A common error is to remove the rejected samples from the calculation of the average. Don't do it!

All samples should be kept, from both accepted and rejected moves.

3.3.1. Optimal step size

If the box is infinitely small, the ratio will be very close to one and all the steps will be accepted. However, the moves will be very correlated and you will explore the configurational space very slowly.

On the other hand, if you propose too large moves, the number of accepted steps will decrease because the ratios might become small. If the number of accepted steps is close to zero, then the space is not well sampled either.

The size of the move should be adjusted so that it is as large as possible, keeping the number of accepted steps not too small. To achieve that, we define the acceptance rate as the number of accepted steps over the total number of steps. Adjusting the time step such that the acceptance rate is close to 0.5 is a good compromise for the current problem.

Below, we use the symbol \(\delta t\) to denote \(\delta L\) since we will use the same variable later on to store a time step.

3.3.2. Exercise

Modify the program of the previous section to compute the energy, sampled with \(\Psi^2\).

Compute also the acceptance rate, so that you can adapt the time step in order to have an acceptance rate close to 0.5.

Can you observe a reduction in the statistical error?

- Python

#!/usr/bin/env python3 from hydrogen import * from qmc_stats import * def MonteCarlo(a,nmax,dt): # TODO return energy/nmax, N_accep/nmax # Run simulation a = 1.2 nmax = 100000 dt = #TODO X0 = [ MonteCarlo(a,nmax,dt) for i in range(30)] # Energy X = [ x for (x, _) in X0 ] E, deltaE = ave_error(X) print(f"E = {E} +/- {deltaE}") # Acceptance rate X = [ x for (_, x) in X0 ] A, deltaA = ave_error(X) print(f"A = {A} +/- {deltaA}")

- Fortran

subroutine metropolis_montecarlo(a,nmax,dt,energy,accep) implicit none double precision, intent(in) :: a integer*8 , intent(in) :: nmax double precision, intent(in) :: dt double precision, intent(out) :: energy double precision, intent(out) :: accep integer*8 :: istep integer*8 :: n_accep double precision :: r_old(3), r_new(3), psi_old, psi_new double precision :: v, ratio double precision, external :: e_loc, psi, gaussian ! TODO end subroutine metropolis_montecarlo program qmc implicit none double precision, parameter :: a = 1.2d0 double precision, parameter :: dt = ! TODO integer*8 , parameter :: nmax = 100000 integer , parameter :: nruns = 30 integer :: irun double precision :: X(nruns), Y(nruns) double precision :: ave, err do irun=1,nruns call metropolis_montecarlo(a,nmax,dt,X(irun),Y(irun)) enddo call ave_error(X,nruns,ave,err) print *, 'E = ', ave, '+/-', err call ave_error(Y,nruns,ave,err) print *, 'A = ', ave, '+/-', err end program qmc

- C

#include <stdio.h> #include <stdlib.h> #include <stddef.h> // for size_t #include <time.h> #include <math.h> #include "hydrogen.h" #include "qmc_stats.h" void metropolis_montecarlo(double a, size_t nmax, double dt, double *energy, double *accep) { // TODO } int main(void) { #define a 1.2 #define nmax 100000 #define dt //TODO #define nruns 30 double energy[nruns]; double accep[nruns]; double ave, err; srand48(time(NULL)); for (size_t irun = 0; irun < nruns; irun++) { metropolis_montecarlo(a, nmax, dt, energy, accep); } ave_error(energy, nruns, &ave, &err); printf("E = %f +/- %f\n", ave, err); ave_error(accep, nruns, &ave, &err); printf("A = %f +/- %f\n", ave, err); return 0; }

3.3.3. Solution solution

- Python

#!/usr/bin/env python3 from hydrogen import * from qmc_stats import * def MonteCarlo(a,nmax,dt): energy = 0. N_accep = 0 r_old = np.random.uniform(-dt, dt, (3)) psi_old = psi(a,r_old) for istep in range(nmax): energy += e_loc(a,r_old) r_new = r_old + np.random.uniform(-dt,dt,(3)) psi_new = psi(a,r_new) ratio = (psi_new / psi_old)**2 if np.random.uniform() <= ratio: N_accep += 1 r_old = r_new psi_old = psi_new return energy/nmax, N_accep/nmax # Run simulation a = 1.2 nmax = 100000 dt = 1.0 X0 = [ MonteCarlo(a,nmax,dt) for i in range(30)] # Energy X = [ x for (x, _) in X0 ] E, deltaE = ave_error(X) print(f"E = {E} +/- {deltaE}") # Acceptance rate X = [ x for (_, x) in X0 ] A, deltaA = ave_error(X) print(f"A = {A} +/- {deltaA}")

E = -0.4802595860693983 +/- 0.0005124420418289207 A = 0.5074913333333334 +/- 0.000350889422714878

- Fortran

subroutine metropolis_montecarlo(a,nmax,dt,energy,accep) implicit none double precision, intent(in) :: a integer*8 , intent(in) :: nmax double precision, intent(in) :: dt double precision, intent(out) :: energy double precision, intent(out) :: accep double precision :: r_old(3), r_new(3), psi_old, psi_new double precision :: v, ratio, u(3) integer*8 :: n_accep integer*8 :: istep double precision, external :: e_loc, psi, gaussian energy = 0.d0 n_accep = 0_8 call random_number(r_old) r_old(:) = dt * (2.d0*r_old(:) - 1.d0) psi_old = psi(a,r_old) do istep = 1,nmax energy = energy + e_loc(a,r_old) call random_number(u) r_new(:) = r_old(:) + dt*(2.d0*u(:) - 1.d0) psi_new = psi(a,r_new) ratio = (psi_new / psi_old)**2 call random_number(v) if (v <= ratio) then n_accep = n_accep + 1_8 r_old(:) = r_new(:) psi_old = psi_new endif end do energy = energy / dble(nmax) accep = dble(n_accep) / dble(nmax) end subroutine metropolis_montecarlo program qmc implicit none double precision, parameter :: a = 1.2d0 double precision, parameter :: dt = 1.0d0 integer*8 , parameter :: nmax = 100000 integer , parameter :: nruns = 30 integer :: irun double precision :: X(nruns), Y(nruns) double precision :: ave, err do irun=1,nruns call metropolis_montecarlo(a,nmax,dt,X(irun),Y(irun)) enddo call ave_error(X,nruns,ave,err) print *, 'E = ', ave, '+/-', err call ave_error(Y,nruns,ave,err) print *, 'A = ', ave, '+/-', err end program qmc

E = -0.48031509056922989 +/- 4.6803231523088529E-004 A = 0.50765333333333340 +/- 3.4368267017377720E-004

- C

#include <stdio.h> #include <stdlib.h> #include <stddef.h> // for size_t #include <math.h> #include <time.h> #include "hydrogen.h" #include "qmc_stats.h" void metropolis_montecarlo(double a, size_t nmax, double dt, double *energy, double *accep) { double r_old[3], r_new[3], psi_old, psi_new, v, ratio; size_t n_accep = 0; *energy = 0.0; for (int i = 0; i < 3; i++) { r_old[i] = dt * (2.0*drand48() - 1.0); } psi_old = psi(a, r_old); for (size_t istep = 0; istep < nmax; istep++) { *energy += e_loc(a, r_old); for (int i = 0; i < 3; i++) { r_new[i] = r_old[i] + dt * (2.0*drand48() - 1.0); } psi_new = psi(a, r_new); ratio = pow(psi_new / psi_old,2); v = drand48(); if (v <= ratio) { n_accep++; for (int i = 0; i < 3; i++) { r_old[i] = r_new[i]; } psi_old = psi_new; } } *energy = *energy / (double) nmax; *accep = (double) n_accep / (double) nmax; } int main(void) { #define a 1.2 #define nmax 100000 #define dt 1.0 #define nruns 30 double X[nruns]; double Y[nruns]; double ave, err; srand48(time(NULL)); for (size_t irun = 0; irun < nruns; irun++) { metropolis_montecarlo(a, nmax, dt, &X[irun], &Y[irun]); } ave_error(X, nruns, &ave, &err); printf("E = %f +/- %f\n", ave, err); ave_error(Y, nruns, &ave, &err); printf("A = %f +/- %f\n", ave, err); return 0; }

E = -0.479518 +/- 0.000466 A = 0.507560 +/- 0.000353

3.4. Generalized Metropolis algorithm

One can use more efficient numerical schemes to move the electrons by choosing a smarter expression for the transition probability.

The Metropolis acceptance step has to be adapted keeping in mind that the detailed balance condition is satisfied. This means that the acceptance probability \(A\) is chosen so that it is consistent with the probability of leaving \(\mathbf{r}_n\) and the probability of entering \(\mathbf{r}_{n+1}\):

\[ P(\mathbf{r}_{n} \rightarrow \mathbf{r}_{n+1}) = A(\mathbf{r}_{n} \rightarrow \mathbf{r}_{n+1}) T(\mathbf{r}_{n} \rightarrow \mathbf{r}_{n+1}) = A(\mathbf{r}_{n+1} \rightarrow \mathbf{r}_{n}) T(\mathbf{r}_{n+1} \rightarrow \mathbf{r}_{n}) \frac{P(\mathbf{r}_{n+1})}{P(\mathbf{r}_{n})} \]

where \(T(\mathbf{r}_n \rightarrow \mathbf{r}_{n+1})\) is the probability of transition from \(\mathbf{r}_n\) to \(\mathbf{r}_{n+1}\) and \(P(\mathbf{r}_n \rightarrow \mathbf{r}_{n+1})\) is the conditional probability \(P(\mathbf{r}_n | \mathbf{r}_{n+1})\) and \(P(\mathbf{r}_n)\) is the probability of being in state \(\mathbf{r}_n\).

In the previous example, we were using uniform sampling in a box centered at the current position. Hence, the transition probability was symmetric

\[ T(\mathbf{r}_{n} \rightarrow \mathbf{r}_{n+1}) = T(\mathbf{r}_{n+1} \rightarrow \mathbf{r}_{n}) = \text{constant}\,, \]

so the expression of \(A\) was simplified to the ratios of the squared wave functions.

Now, if instead of drawing uniform random numbers, we choose to draw Gaussian random numbers with zero mean and variance \(\delta t\), the transition probability becomes:

\[ T(\mathbf{r}_{n} \rightarrow \mathbf{r}_{n+1}) = \frac{1}{(2\pi\,\delta t)^{3/2}} \exp \left[ - \frac{\left( \mathbf{r}_{n+1} - \mathbf{r}_{n} \right)^2}{2\delta t} \right]\,. \]

Furthermore, to sample the density even better, we can "push" the electrons into in the regions of high probability, and "pull" them away from the low-probability regions. This will increase the acceptance ratios and improve the sampling.

To do this, we can use the gradient of the probability density

\[ \frac{\nabla [ \Psi^2 ]}{\Psi^2} = 2 \frac{\nabla \Psi}{\Psi}\,, \]

and add the so-called drift vector, \(\frac{\nabla \Psi}{\Psi}\), so that the numerical scheme becomes a drifted diffusion with transition probability:

\[ T(\mathbf{r}_{n} \rightarrow \mathbf{r}_{n+1}) = \frac{1}{(2\pi\,\delta t)^{3/2}} \exp \left[ - \frac{\left( \mathbf{r}_{n+1} - \mathbf{r}_{n} - \delta t\frac{\nabla \Psi(\mathbf{r}_n)}{\Psi(\mathbf{r}_n)} \right)^2}{2\,\delta t} \right]\,. \]

The corresponding move is proposed as

\[ \mathbf{r}_{n+1} = \mathbf{r}_{n} + \delta t\, \frac{\nabla \Psi(\mathbf{r})}{\Psi(\mathbf{r})} + \sqrt{\delta t}\, \chi \,, \]

where \(\chi\) is a Gaussian random variable with zero mean and variance 1. Multiplying by \(\sqrt{\delta t}\) makes the variance of the additional noise equal to \(\delta{t}\).

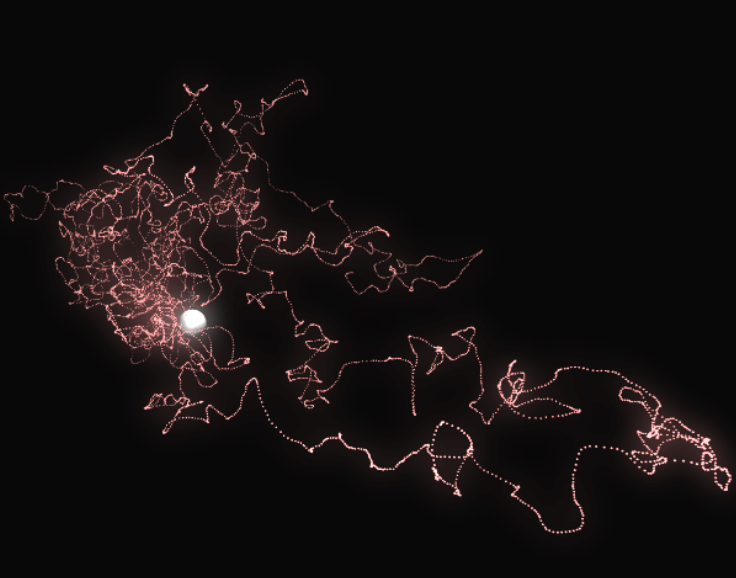

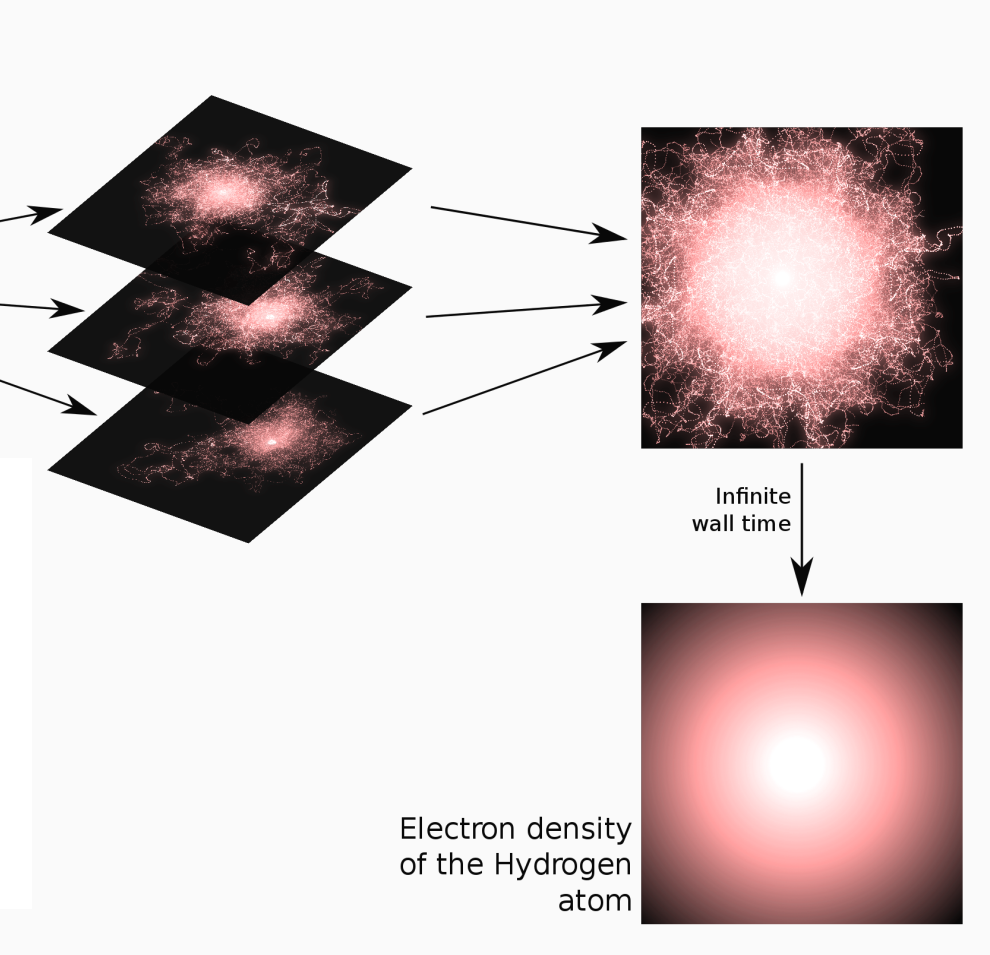

Here is an illustration of a trajectory:

Averaging all the trajectories converges to the density of the

hydrogen atom.

The algorithm of the previous exercise is only slightly modified as:

- Evaluate the local energy at \(\mathbf{r}_{n}\) and accumulate it

- Compute a new position \(\mathbf{r'} = \mathbf{r}_n + \delta t\, \frac{\nabla \Psi(\mathbf{r})}{\Psi(\mathbf{r})} + \sqrt{\delta t}\,\chi\)

- Evaluate \(\Psi(\mathbf{r}')\) and \(\frac{\nabla \Psi(\mathbf{r'})}{\Psi(\mathbf{r'})}\) at the new position

- Compute the ratio \(A = \frac{T(\mathbf{r}' \rightarrow \mathbf{r}_{n}) P(\mathbf{r}')}{T(\mathbf{r}_{n} \rightarrow \mathbf{r}') P(\mathbf{r}_{n})}\)

- Draw a uniform random number \(v \in [0,1]\)

- if \(v \le A\), accept the move : set \(\mathbf{r}_{n+1} = \mathbf{r'}\)

- else, reject the move : set \(\mathbf{r}_{n+1} = \mathbf{r}_n\)

3.4.1. Gaussian random number generator

To obtain Gaussian-distributed random numbers, you can apply the Box Muller transform to uniform random numbers:

\begin{eqnarray*} z_1 &=& \sqrt{-2 \ln u_1} \cos(2 \pi u_2) \\ z_2 &=& \sqrt{-2 \ln u_1} \sin(2 \pi u_2) \end{eqnarray*}This gives Gaussian-distributed random numbers with zero mean and a variance of one.

Below is a Fortran and a C implementation returning a

Gaussian-distributed n-dimensional vector \(\mathbf{z}\). This will

be useful for the following sections.

In Python, you can use the random.normal function of Numpy.

If you use Fortran of C, copy/paste the random_gauss function in

the qmc_stats.F90 or qmc_stats.c file.

- Fortran

subroutine random_gauss(z,n) implicit none integer, intent(in) :: n double precision, intent(out) :: z(n) double precision :: u(n+1) double precision, parameter :: two_pi = 2.d0*dacos(-1.d0) integer :: i call random_number(u) if (iand(n,1) == 0) then ! n is even do i=1,n,2 z(i) = dsqrt(-2.d0*dlog(u(i))) z(i+1) = z(i) * dsin( two_pi*u(i+1) ) z(i) = z(i) * dcos( two_pi*u(i+1) ) end do else ! n is odd do i=1,n-1,2 z(i) = dsqrt(-2.d0*dlog(u(i))) z(i+1) = z(i) * dsin( two_pi*u(i+1) ) z(i) = z(i) * dcos( two_pi*u(i+1) ) end do z(n) = dsqrt(-2.d0*dlog(u(n))) z(n) = z(n) * dcos( two_pi*u(n+1) ) end if end subroutine random_gauss

- C

void random_gauss(double* z, size_t n);

#include <stdlib.h> void random_gauss(double* z, size_t n) { double u[n+1]; double two_pi = 2.0 * acos(-1.0); size_t i; //generate random numbers for (i = 0; i <= n; i++) { u[i] = drand48(); } if (n % 2 == 0) { // n is even for (i = 0; i < n; i += 2) { z[i] = sqrt(-2.0 * log(u[i])); z[i+1] = z[i] * sin(two_pi * u[i+1]); z[i] = z[i] * cos(two_pi * u[i+1]); } } else { // n is odd for (i = 0; i < n-1; i += 2) { z[i] = sqrt(-2.0 * log(u[i])); z[i+1] = z[i] * sin(two_pi * u[i+1]); z[i] = z[i] * cos(two_pi * u[i+1]); } z[n-1] = sqrt(-2.0 * log(u[n-1])); z[n-1] = z[n-1] * cos(two_pi * u[n]); } }

3.4.2. Exercise 1

Write a function to compute the drift vector \(\frac{\nabla \Psi(\mathbf{r})}{\Psi(\mathbf{r})}\).

3.4.3. Solution solution

- Python

def drift(a,r): ar_inv = -a/np.sqrt(np.dot(r,r)) return r * ar_inv

- Fortran

subroutine drift(a,r,b) implicit none double precision, intent(in) :: a, r(3) double precision, intent(out) :: b(3) double precision :: ar_inv ar_inv = -a / dsqrt(r(1)*r(1) + r(2)*r(2) + r(3)*r(3)) b(:) = r(:) * ar_inv end subroutine drift

- C

void drift(double a, double r[3], double b[3]);

void drift(double a, double r[3], double b[3]) { double ar_inv = -a / sqrt(r[0]*r[0] + r[1]*r[1] + r[2]*r[2]); for (int i = 0; i < 3; i++) { b[i] = r[i] * ar_inv; } }

3.4.4. Exercise 2

Modify the previous program to introduce the drift-diffusion scheme. (This is a necessary step for the next section on diffusion Monte Carlo).

- Python

#!/usr/bin/env python3 from hydrogen import * from qmc_stats import * def MonteCarlo(a,nmax,dt): # TODO # Run simulation a = 1.2 nmax = 100000 dt = # TODO X0 = [ MonteCarlo(a,nmax,dt) for i in range(30)] # Energy X = [ x for (x, _) in X0 ] E, deltaE = ave_error(X) print(f"E = {E} +/- {deltaE}") # Acceptance rate X = [ x for (_, x) in X0 ] A, deltaA = ave_error(X) print(f"A = {A} +/- {deltaA}")

- Fortran

subroutine variational_montecarlo(a,nmax,dt,energy,accep) implicit none double precision, intent(in) :: a, dt integer*8 , intent(in) :: nmax double precision, intent(out) :: energy, accep integer*8 :: istep integer*8 :: n_accep double precision :: sq_dt, chi(3) double precision :: psi_old, psi_new double precision :: r_old(3), r_new(3) double precision :: d_old(3), d_new(3) double precision, external :: e_loc, psi ! TODO end subroutine variational_montecarlo program qmc implicit none double precision, parameter :: a = 1.2d0 double precision, parameter :: dt = ! TODO integer*8 , parameter :: nmax = 100000 integer , parameter :: nruns = 30 integer :: irun double precision :: X(nruns), accep(nruns) double precision :: ave, err do irun=1,nruns call variational_montecarlo(a,nmax,dt,X(irun),accep(irun)) enddo call ave_error(X,nruns,ave,err) print *, 'E = ', ave, '+/-', err call ave_error(accep,nruns,ave,err) print *, 'A = ', ave, '+/-', err end program qmc

gfortran hydrogen.F90 qmc_stats.F90 vmc_metropolis.F90 -o vmc_metropolis ./vmc_metropolis

- C

#include <stdio.h> #include <stdlib.h> #include <stddef.h> // for size_t #include <math.h> #include <time.h> #include "hydrogen.h" #include "qmc_stats.h" void variational_montecarlo(double a, size_t nmax, double dt, double *energy, double *accep) { // TODO } int main(void) { #define a 1.2 #define nmax 100000 #define dt // TODO #define nruns 30 double X[nruns]; double Y[nruns]; double ave, err; srand48(time(NULL)); for (size_t irun = 0; irun < nruns; irun++) { variational_montecarlo(a, nmax, dt, &X[irun], &Y[irun]); } ave_error(X, nruns, &ave, &err); printf("E = %f +/- %f\n", ave, err); ave_error(Y, nruns, &ave, &err); printf("A = %f +/- %f\n", ave, err); return 0; }

3.4.5. Solution solution

The ratio of transition probabilities can be expressed as:

\begin{eqnarray*} \frac{T(\mathbf{r}_{n} \rightarrow \mathbf{r}_{n+1})}{T(\mathbf{r}_{n+1} \rightarrow \mathbf{r}_{n})} & = & \frac{\exp \left[ - \frac{\left( \mathbf{r}_{n+1} - \mathbf{r}_{n} - \delta t\frac{\nabla \Psi(\mathbf{r}_n)}{\Psi(\mathbf{r}_n)} \right)^2}{2\,\delta t} \right] }{\exp \left[ - \frac{\left( \mathbf{r}_{n} - \mathbf{r}_{n+1} - \delta t\frac{\nabla \Psi(\mathbf{r}_{n+1})}{\Psi(\mathbf{r}_{n+1})} \right)^2}{2\,\delta t} \right]} \\ & = & \exp \left[ - \frac{\left( \mathbf{r}_{n+1} - \mathbf{r}_{n} - \delta t\frac{\nabla \Psi(\mathbf{r}_n)}{\Psi(\mathbf{r}_n)} \right)^2}{2\,\delta t} + \frac{\left( \mathbf{r}_{n} - \mathbf{r}_{n+1} - \delta t\frac{\nabla \Psi(\mathbf{r}_{n+1})}{\Psi(\mathbf{r}_{n+1})} \right)^2}{2\,\delta t} \right] \\ & = & \exp \left[ - \frac{1}{2\,\delta t} |\mathbf{r}_{n+1} - \mathbf{r}_{n}|^2 + (\mathbf{r}_{n+1} - \mathbf{r}_{n})\cdot \frac{\nabla \Psi(\mathbf{r}_n)}{\Psi(\mathbf{r}_n)} - \frac{1}{2}\delta t \left(\frac{\nabla \Psi(\mathbf{r}_n)}{\Psi(\mathbf{r}_n)}\right)^2 \right.\\ & & \left. + \frac{1}{2\,\delta t} |\mathbf{r}_{n} - \mathbf{r}_{n+1}|^2 - (\mathbf{r}_{n} - \mathbf{r}_{n+1})\cdot \frac{\nabla \Psi(\mathbf{r}_{n+1})}{\Psi(\mathbf{r}_{n+1})} + \frac{1}{2}\delta t \left(\frac{\nabla \Psi(\mathbf{r}_{n+1})}{\Psi(\mathbf{r}_{n+1})}\right)^2 \right] \\ & = & \exp \left[ (\mathbf{r}_{n+1} - \mathbf{r}_{n})\cdot \left( \frac{\nabla \Psi(\mathbf{r}_{n+1})}{\Psi(\mathbf{r}_{n+1})} + \frac{\nabla \Psi(\mathbf{r}_{n})}{\Psi(\mathbf{r}_{n})} \right) + \frac{1}{2}\delta t \left( \left(\frac{\nabla \Psi(\mathbf{r}_{n+1})}{\Psi(\mathbf{r}_{n+1})}\right)^2 - \left(\frac{\nabla \Psi(\mathbf{r}_{n})}{\Psi(\mathbf{r}_{n})}\right)^2 \right) \right] \end{eqnarray*}- Python

#!/usr/bin/env python3 from hydrogen import * from qmc_stats import * def MonteCarlo(a,nmax,dt): sq_dt = np.sqrt(dt) energy = 0. N_accep = 0 r_old = np.random.normal(loc=0., scale=1.0, size=(3)) d_old = drift(a,r_old) d2_old = np.dot(d_old,d_old) psi_old = psi(a,r_old) for istep in range(nmax): chi = np.random.normal(loc=0., scale=1.0, size=(3)) energy += e_loc(a,r_old) r_new = r_old + dt * d_old + sq_dt * chi d_new = drift(a,r_new) d2_new = np.dot(d_new,d_new) psi_new = psi(a,r_new) # Metropolis prod = np.dot((d_new + d_old), (r_new - r_old)) argexpo = 0.5 * (d2_new - d2_old)*dt + prod q = psi_new / psi_old q = np.exp(-argexpo) * q*q if np.random.uniform() <= q: N_accep += 1 r_old = r_new d_old = d_new d2_old = d2_new psi_old = psi_new return energy/nmax, N_accep/nmax # Run simulation a = 1.2 nmax = 100000 dt = 1.0 X0 = [ MonteCarlo(a,nmax,dt) for i in range(30)] # Energy X = [ x for (x, _) in X0 ] E, deltaE = ave_error(X) print(f"E = {E} +/- {deltaE}") # Acceptance rate X = [ x for (_, x) in X0 ] A, deltaA = ave_error(X) print(f"A = {A} +/- {deltaA}")

E = -0.48034171558629885 +/- 0.0005286038561061781 A = 0.6210380000000001 +/- 0.0005457375900937905

- Fortran

subroutine variational_montecarlo(a,nmax,dt,energy,accep) implicit none double precision, intent(in) :: a, dt integer*8 , intent(in) :: nmax double precision, intent(out) :: energy, accep integer*8 :: istep integer*8 :: n_accep double precision :: sq_dt, chi(3), d2_old, prod, u double precision :: psi_old, psi_new, d2_new, argexpo, q double precision :: r_old(3), r_new(3) double precision :: d_old(3), d_new(3) double precision, external :: e_loc, psi sq_dt = dsqrt(dt) ! Initialization energy = 0.d0 n_accep = 0_8 call random_gauss(r_old,3) call drift(a,r_old,d_old) d2_old = d_old(1)*d_old(1) + & d_old(2)*d_old(2) + & d_old(3)*d_old(3) psi_old = psi(a,r_old) do istep = 1,nmax energy = energy + e_loc(a,r_old) call random_gauss(chi,3) r_new(:) = r_old(:) + dt*d_old(:) + chi(:)*sq_dt call drift(a,r_new,d_new) d2_new = d_new(1)*d_new(1) + & d_new(2)*d_new(2) + & d_new(3)*d_new(3) psi_new = psi(a,r_new) ! Metropolis prod = (d_new(1) + d_old(1))*(r_new(1) - r_old(1)) + & (d_new(2) + d_old(2))*(r_new(2) - r_old(2)) + & (d_new(3) + d_old(3))*(r_new(3) - r_old(3)) argexpo = 0.5d0 * (d2_new - d2_old)*dt + prod q = psi_new / psi_old q = dexp(-argexpo) * q*q call random_number(u) if (u <= q) then n_accep = n_accep + 1_8 r_old(:) = r_new(:) d_old(:) = d_new(:) d2_old = d2_new psi_old = psi_new end if end do energy = energy / dble(nmax) accep = dble(n_accep) / dble(nmax) end subroutine variational_montecarlo program qmc implicit none double precision, parameter :: a = 1.2d0 double precision, parameter :: dt = 1.0d0 integer*8 , parameter :: nmax = 100000 integer , parameter :: nruns = 30 integer :: irun double precision :: X(nruns), accep(nruns) double precision :: ave, err do irun=1,nruns call variational_montecarlo(a,nmax,dt,X(irun),accep(irun)) enddo call ave_error(X,nruns,ave,err) print *, 'E = ', ave, '+/-', err call ave_error(accep,nruns,ave,err) print *, 'A = ', ave, '+/-', err end program qmc

E = -0.47965766050774800 +/- 5.0599992102209337E-004 A = 0.62055466666666670 +/- 4.3100330247376571E-004

- C

#include <stdio.h> #include <stdlib.h> #include <stddef.h> // for size_t #include <math.h> #include <time.h> #include "hydrogen.h" #include "qmc_stats.h" void variational_montecarlo(double a, size_t nmax, double dt, double *energy, double *accep) { double chi[3], d2_old, prod, u; double psi_old, psi_new, d2_new, argexpo, q; double r_old[3], r_new[3]; double d_old[3], d_new[3]; size_t istep, n_accep = 0; double sq_dt = sqrt(dt); // Initialization *energy = 0.0; random_gauss(r_old, 3); drift(a, r_old, d_old); d2_old = d_old[0]*d_old[0] + d_old[1]*d_old[1] + d_old[2]*d_old[2]; psi_old = psi(a, r_old); for (istep = 0; istep < nmax; istep++) { *energy += e_loc(a, r_old); random_gauss(chi, 3); for (int i = 0; i < 3; i++) { r_new[i] = r_old[i] + dt*d_old[i] + chi[i]*sq_dt; } drift(a, r_new, d_new); d2_new = d_new[0]*d_new[0] + d_new[1]*d_new[1] + d_new[2]*d_new[2]; psi_new = psi(a, r_new); // Metropolis prod = (d_new[0] + d_old[0])*(r_new[0] - r_old[0]) + (d_new[1] + d_old[1])*(r_new[1] - r_old[1]) + (d_new[2] + d_old[2])*(r_new[2] - r_old[2]); argexpo = 0.5 * (d2_new - d2_old)*dt + prod; q = psi_new / psi_old; q = exp(-argexpo) * q*q; u = drand48(); if (u <= q) { n_accep++; for (int i = 0; i < 3; i++) { r_old[i] = r_new[i]; d_old[i] = d_new[i]; } d2_old = d2_new; psi_old = psi_new; } } *energy = *energy / (double) nmax; *accep = (double) n_accep / (double) nmax; } int main(void) { #define a 1.2 #define nmax 100000 #define dt 1.0 #define nruns 30 double X[nruns]; double Y[nruns]; double ave, err; srand48(time(NULL)); for (size_t irun = 0; irun < nruns; irun++) { variational_montecarlo(a, nmax, dt, &X[irun], &Y[irun]); } ave_error(X, nruns, &ave, &err); printf("E = %f +/- %f\n", ave, err); ave_error(Y, nruns, &ave, &err); printf("A = %f +/- %f\n", ave, err); return 0; }

E = -0.479814 +/- 0.000552 A = 0.621853 +/- 0.000401

4. Diffusion Monte Carlo

As we have seen, Variational Monte Carlo is a powerful method to compute integrals in large dimensions. It is often used in cases where the expression of the wave function is such that the integrals can't be evaluated (multi-centered Slater-type orbitals, correlation factors, etc).

Diffusion Monte Carlo is different. It goes beyond the computation of the integrals associated with an input wave function, and aims at finding a near-exact numerical solution to the Schrödinger equation.

4.1. Schrödinger equation in imaginary time

Consider the time-dependent Schrödinger equation:

\[ i\frac{\partial \Psi(\mathbf{r},t)}{\partial t} = (\hat{H} -E_{\rm ref}) \Psi(\mathbf{r},t)\,. \]

where we introduced a shift in the energy, \(E_{\rm ref}\), for reasons which will become apparent below.

We can expand a given starting wave function, \(\Psi(\mathbf{r},0)\), in the basis of the eigenstates of the time-independent Hamiltonian, \(\Phi_k\), with energies \(E_k\):

\[ \Psi(\mathbf{r},0) = \sum_k a_k\, \Phi_k(\mathbf{r}). \]

The solution of the Schrödinger equation at time \(t\) is

\[ \Psi(\mathbf{r},t) = \sum_k a_k \exp \left( -i\, (E_k-E_{\rm ref})\, t \right) \Phi_k(\mathbf{r}). \]

Now, if we replace the time variable \(t\) by an imaginary time variable \(\tau=i\,t\), we obtain

\[ -\frac{\partial \psi(\mathbf{r}, \tau)}{\partial \tau} = (\hat{H} -E_{\rm ref}) \psi(\mathbf{r}, \tau) \]

where \(\psi(\mathbf{r},\tau) = \Psi(\mathbf{r},-i\,\tau)\) and

\begin{eqnarray*} \psi(\mathbf{r},\tau) &=& \sum_k a_k \exp( -(E_k-E_{\rm ref})\, \tau) \Phi_k(\mathbf{r})\\ &=& \exp(-(E_0-E_{\rm ref})\, \tau)\sum_k a_k \exp( -(E_k-E_0)\, \tau) \Phi_k(\mathbf{r})\,. \end{eqnarray*}For large positive values of \(\tau\), \(\psi\) is dominated by the \(k=0\) term, namely, the lowest eigenstate. If we adjust \(E_{\rm ref}\) to the running estimate of \(E_0\), we can expect that simulating the differential equation in imaginary time will converge to the exact ground state of the system.

4.2. Relation to diffusion

The diffusion equation of particles is given by

\[ \frac{\partial \psi(\mathbf{r},t)}{\partial t} = D\, \Delta \psi(\mathbf{r},t) \]

where \(D\) is the diffusion coefficient. When the imaginary-time Schrödinger equation is written in terms of the kinetic energy and potential,

\[ \frac{\partial \psi(\mathbf{r}, \tau)}{\partial \tau} = \left(\frac{1}{2}\Delta - [V(\mathbf{r}) -E_{\rm ref}]\right) \psi(\mathbf{r}, \tau)\,, \]

it can be identified as the combination of:

- a diffusion equation (Laplacian)

- an equation whose solution is an exponential (potential)

The diffusion equation can be simulated by a Brownian motion:

\[ \mathbf{r}_{n+1} = \mathbf{r}_{n} + \sqrt{\delta t}\, \chi \]

where \(\chi\) is a Gaussian random variable, and the potential term can be simulated by creating or destroying particles over time (a so-called branching process) or by simply considering it as a cumulative multiplicative weight along the diffusion trajectory (pure Diffusion Monte Carlo):

\[ \prod_i \exp \left( - (V(\mathbf{r}_i) - E_{\text{ref}}) \delta t \right). \]

We note that the ground-state wave function of a Fermionic system is antisymmetric and changes sign. Therefore, its interpretation as a probability distribution is somewhat problematic. In fact, mathematically, since the Bosonic ground state is lower in energy than the Fermionic one, for large \(\tau\), the system will evolve towards the Bosonic solution.

For the systems you will study, this is not an issue:

- Hydrogen atom: You only have one electron!

- Two-electron system (\(H_2\) or He): The ground-wave function is antisymmetric in the spin variables but symmetric in the space ones.

Therefore, in both cases, you are dealing with a "Bosonic" ground state.

4.3. Importance sampling

In a molecular system, the potential is far from being constant and, in fact, diverges at the inter-particle coalescence points. Hence, it results in very large fluctuations of the weight associated with the potential, making the calculations impossible in practice. Fortunately, if we multiply the Schrödinger equation by a chosen trial wave function \(\Psi_T(\mathbf{r})\) (Hartree-Fock, Kohn-Sham determinant, CI wave function, etc), one obtains

\[ -\frac{\partial \psi(\mathbf{r},\tau)}{\partial \tau} \Psi_T(\mathbf{r}) = \left[ -\frac{1}{2} \Delta \psi(\mathbf{r},\tau) + V(\mathbf{r}) \psi(\mathbf{r},\tau) \right] \Psi_T(\mathbf{r}) \]

Defining \(\Pi(\mathbf{r},\tau) = \psi(\mathbf{r},\tau) \Psi_T(\mathbf{r})\), (see appendix for details)

\[ -\frac{\partial \Pi(\mathbf{r},\tau)}{\partial \tau} = -\frac{1}{2} \Delta \Pi(\mathbf{r},\tau) + \nabla \left[ \Pi(\mathbf{r},\tau) \frac{\nabla \Psi_T(\mathbf{r})}{\Psi_T(\mathbf{r})} \right] + (E_L(\mathbf{r})-E_{\rm ref})\Pi(\mathbf{r},\tau) \]

The new "kinetic energy" can be simulated by the drift-diffusion scheme presented in the previous section (VMC). The new "potential" is the local energy, which has smaller fluctuations when \(\Psi_T\) gets closer to the exact wave function. This term can be simulated by \[ \prod_i \exp \left( - (E_L(\mathbf{r}_i) - E_{\text{ref}}) \delta t \right). \] where \(E_{\rm ref}\) is the constant we had introduced above, which is adjusted to an estimate of the average energy to keep the weights close to one.

This equation generates the N-electron density \(\Pi\), which is the product of the ground state solution with the trial wave function. You may then ask: how can we compute the total energy of the system?

To this aim, we use the mixed estimator of the energy: